In this article we explain what you need to know about Apache Avro, an open source serialization solution for big data, and why you should not do without it.

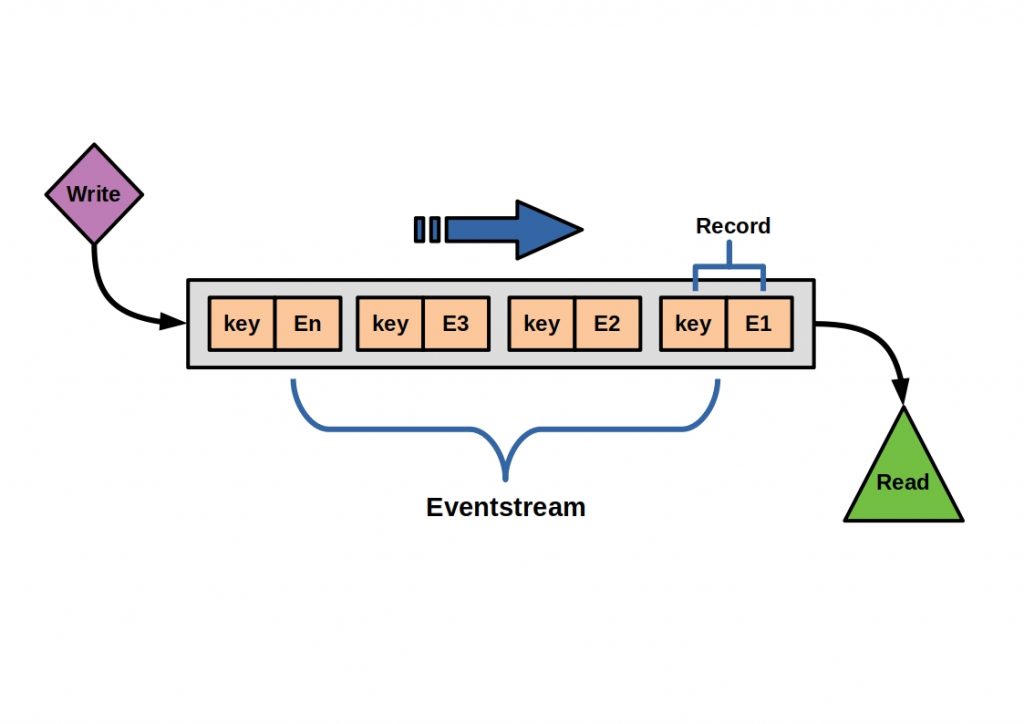

You can serialize data objects, i.e. convert them into a sequential representation, in order to store or send them independently of the programming language. In text-based formats, the structure of the text reflects your data hierarchy. Well-known serialization formats include XML and JSON. If you want to know more about both formats, read our articles on the topics. To read the data again, you have to deserialize it, i.e. convert it back into an object.
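The round trip described above can be sketched in a few lines of Python. This is only an illustration using the standard library's `json` module; the `order` object and its fields are made up for the example.

```python
import json

# A nested object whose hierarchy should survive the round trip (made-up data).
order = {
    "id": 42,
    "customer": {"name": "Ada", "city": "Zurich"},
    "items": ["keyboard", "mouse"],
}

# Serialize: turn the in-memory object into a sequential text representation.
text = json.dumps(order)

# Deserialize: parse the text back into an equivalent object.
restored = json.loads(text)

print(text)
print(restored == order)
```

Note that the text repeats every field name for every object. This is convenient for humans, but it is exactly the overhead a schema-based format tries to avoid.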

In times of Big Data, every computing process must be optimized. With a large data throughput, even small delays per record add up to long delays, and bulky data formats tie up too many resources. The decisive factors are therefore speed and the smallest possible size of the stored data. Avro is developed by the Apache community and is optimized for Big Data use. It offers you a fast and space-saving open source solution. If you don’t know what Apache means, see our overview article, where we have summarized everything you need to know about it and introduce you to some other Apache open source projects you should know about.

Apache Avro – Open Source Big Data Serialization Solution

With Apache Avro, you get not only a remote procedure call (RPC) framework, but also a data serialization framework. On the one hand you can call functions in other address spaces, and on the other hand you can convert data into a compact binary or text format. This duality gives you some advantages when you have cross-network data pipelines, and it is explained by Avro's development history.

Avro was first released back in 2009 as a part of Apache Hadoop. Here, Avro was supposed to provide a serialization format for data persistence as well as a data transfer format for communication between Hadoop nodes. To provide this functionality in a Hadoop cluster, Avro needed to be able to access other address spaces. Because it can serialize large amounts of data cost-efficiently, Avro is now also used independently of Hadoop.

You can access Avro via dedicated APIs from many common programming languages (Java, C#, C, C++, Python and Ruby), so you can integrate it very flexibly.

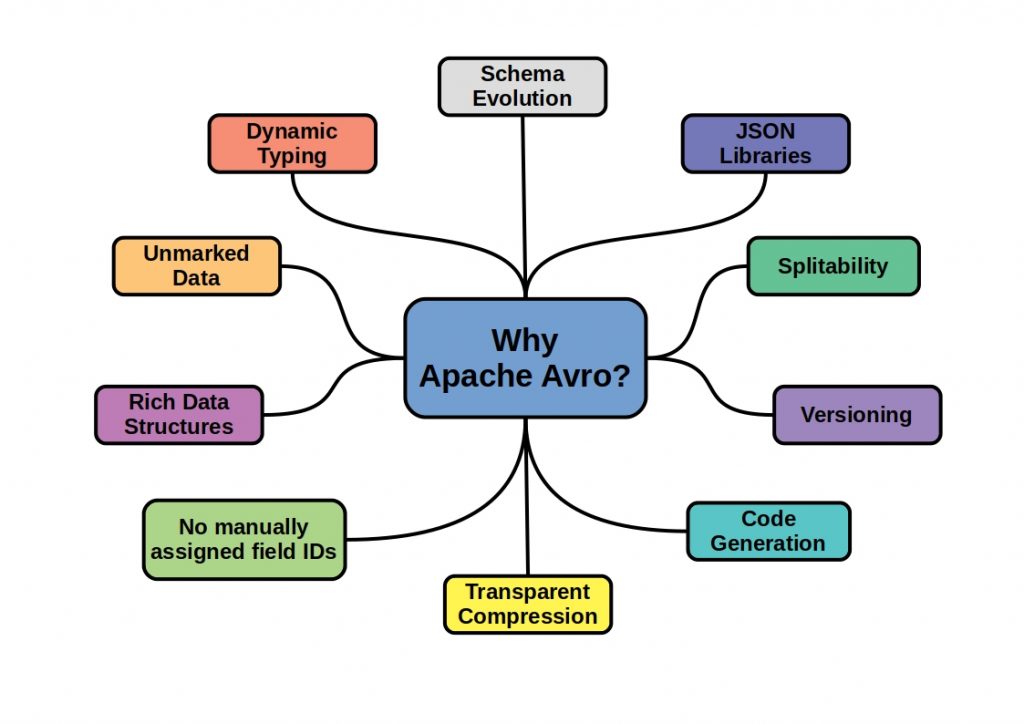

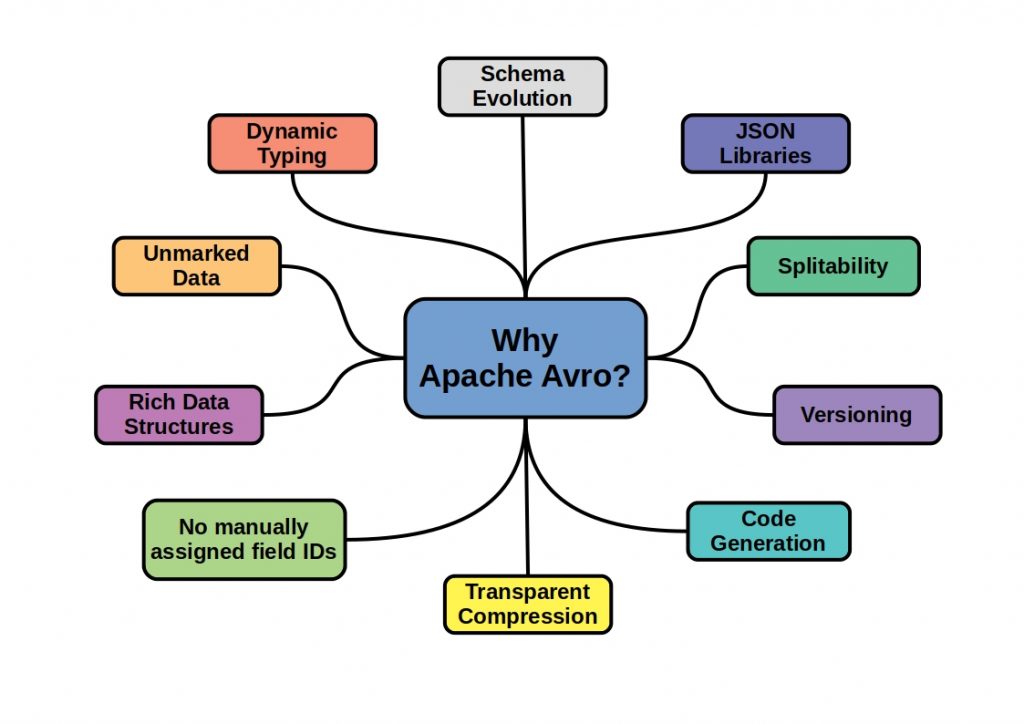

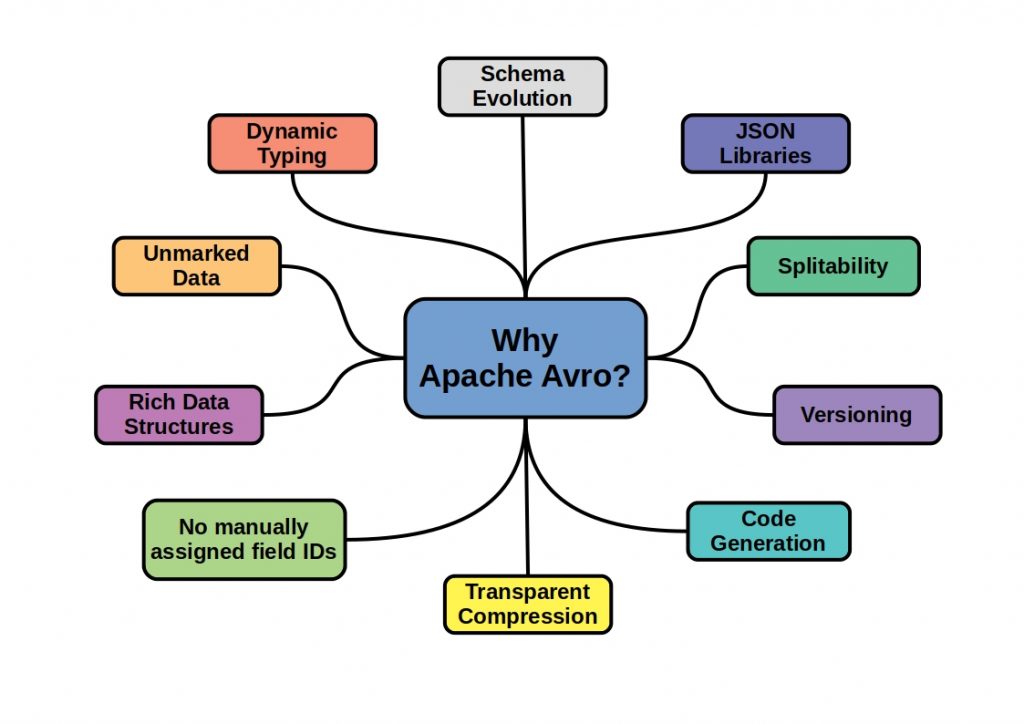

In the following figure we have summarized some of the reasons that make the framework so ingenious. But what really makes Avro so fast?

What makes Avro so fast?

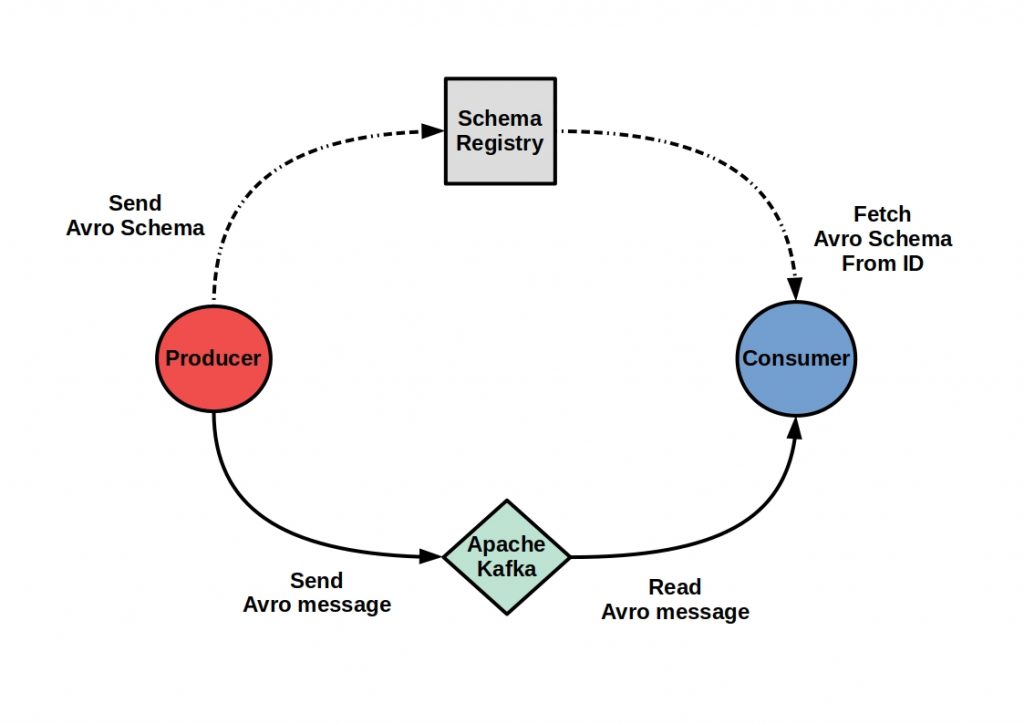

The trick is that a schema is used for serialization and deserialization. The data hierarchy, i.e. the metadata, is stored separately from the actual values, for example in a file. The data types and protocols are defined in a JSON format. The schema can be assigned unambiguously to the actual values by an ID and can be looked up at any time during further data processing. Because field names and types live in the schema, the serialized values themselves stay compact. For RPC calls, the schema is sent along with the data exchange.
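To make the effect of the schema tangible, here is a minimal hand-rolled sketch in Python. It does not use the Avro library. It only imitates the binary encoding rules of the Avro specification for two types: `long` values are written as zigzag variable-length integers, and `string` values as a length followed by UTF-8 bytes. The `User` schema and the helper names (`encode_long`, `encode_string`, `encode_record`) are assumptions made for this example.

```python
import json

# Hypothetical example schema, written in Avro's JSON schema syntax.
SCHEMA = json.loads("""
{
  "type": "record",
  "name": "User",
  "fields": [
    {"name": "name", "type": "string"},
    {"name": "age",  "type": "long"}
  ]
}
""")


def encode_long(n: int) -> bytes:
    """Zigzag plus variable-length encoding for values in the 64-bit range."""
    z = (n << 1) ^ (n >> 63)  # zigzag: small magnitudes become small unsigned values
    out = bytearray()
    while z > 0x7F:
        out.append((z & 0x7F) | 0x80)  # low 7 bits, continuation bit set
        z >>= 7
    out.append(z)
    return bytes(out)


def encode_string(s: str) -> bytes:
    """Length (encoded as a long) followed by the UTF-8 bytes."""
    data = s.encode("utf-8")
    return encode_long(len(data)) + data


ENCODERS = {"long": encode_long, "string": encode_string}


def encode_record(schema: dict, record: dict) -> bytes:
    # Fields are written in schema order, without names or type tags.
    return b"".join(
        ENCODERS[field["type"]](record[field["name"]]) for field in schema["fields"]
    )


user = {"name": "Ada", "age": 36}
binary = encode_record(SCHEMA, user)
as_json = json.dumps(user).encode("utf-8")

print(binary.hex())
print(len(binary), "bytes with schema vs", len(as_json), "bytes as JSON")
```

The binary form contains only the values. A reader that does not know the schema cannot interpret it, which is why the schema has to travel with the data or be retrievable separately.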

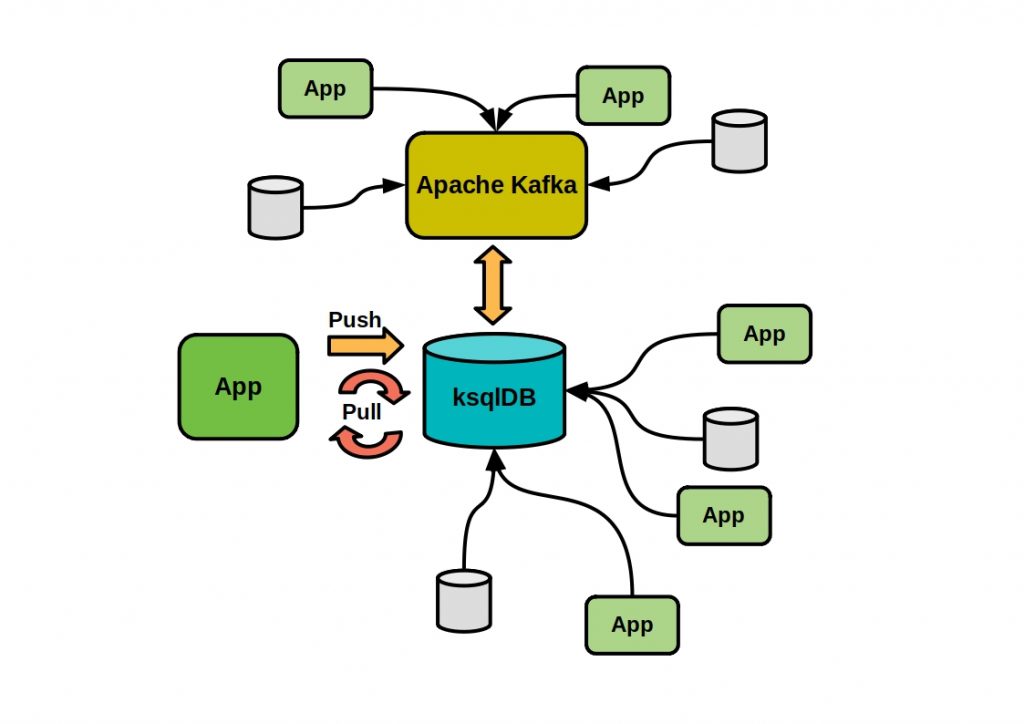

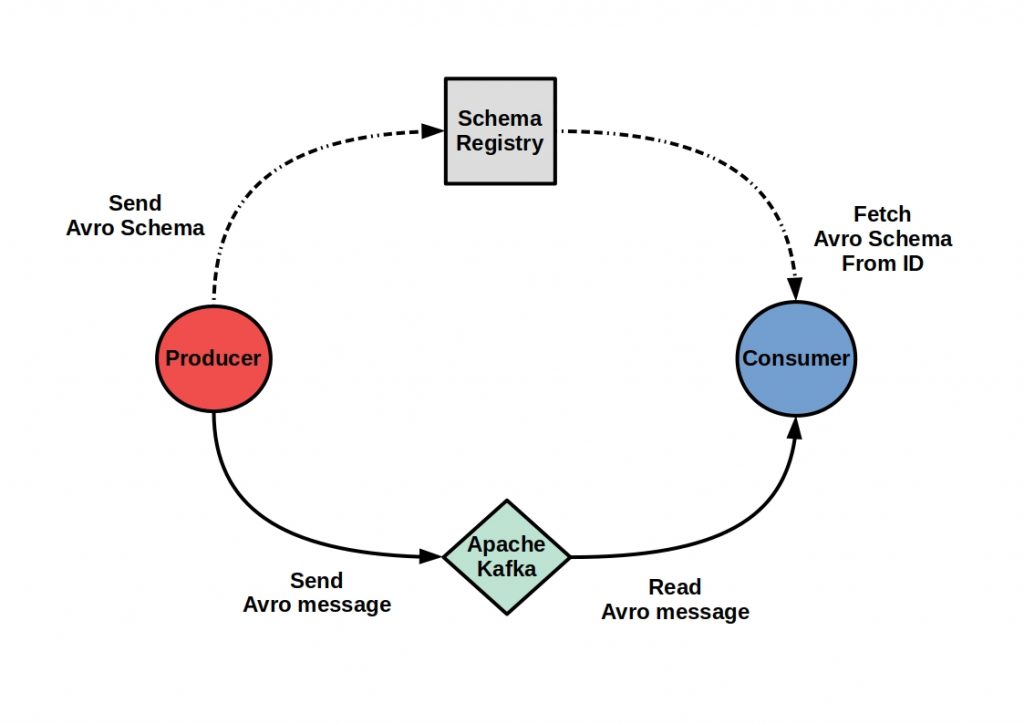

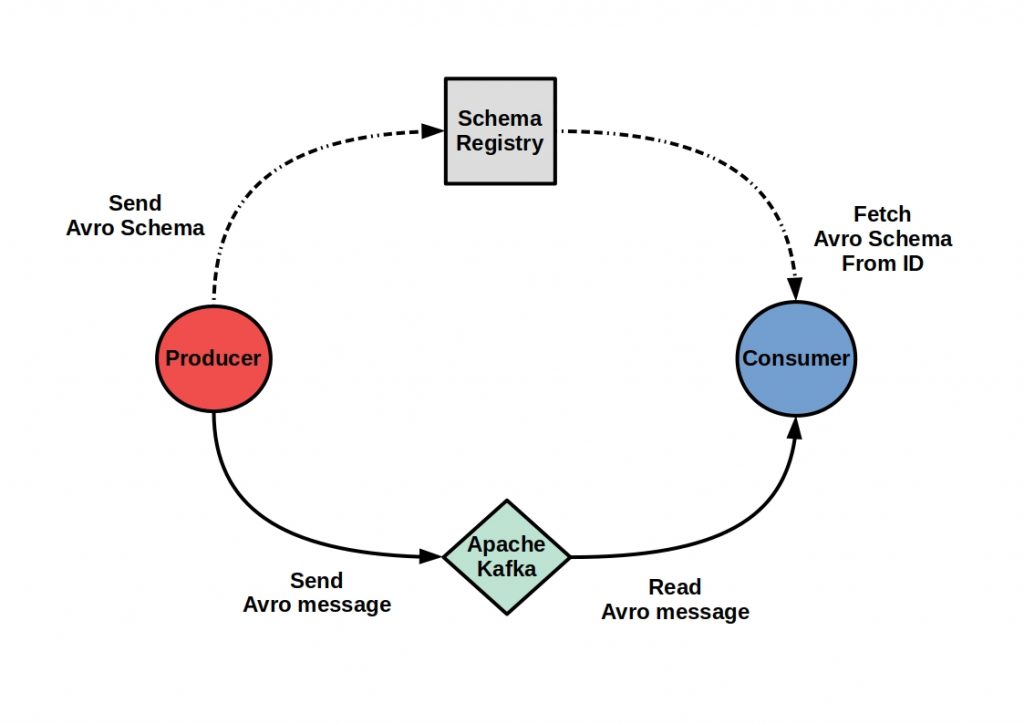

Creating a schema registry is especially useful when processing data streams with Apache Kafka.

Apache Avro and Apache Kafka

Here you can save a lot of overhead if you store the metadata separately and retrieve it only when you really need it. In the following figure we have shown you this process schematically.
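The idea of keeping the schema out of every message can be sketched as follows. The `InMemorySchemaRegistry` class is a hypothetical stand-in for a real registry service, and the framing (a 4-byte big-endian schema ID in front of the payload) is an assumption chosen for this illustration, not a description of a specific product's wire format.

```python
import json
import struct


class InMemorySchemaRegistry:
    """Hypothetical stand-in for a schema registry service."""

    def __init__(self):
        self._ids = {}      # canonical schema text -> ID
        self._schemas = {}  # ID -> schema

    def register(self, schema: dict) -> int:
        # Registering the same schema twice returns the same ID.
        key = json.dumps(schema, sort_keys=True)
        if key not in self._ids:
            new_id = len(self._ids) + 1
            self._ids[key] = new_id
            self._schemas[new_id] = schema
        return self._ids[key]

    def lookup(self, schema_id: int) -> dict:
        return self._schemas[schema_id]


def frame(schema_id: int, payload: bytes) -> bytes:
    # Prefix the payload with a 4-byte big-endian schema ID (assumed framing).
    return struct.pack(">I", schema_id) + payload


def unframe(message: bytes):
    (schema_id,) = struct.unpack(">I", message[:4])
    return schema_id, message[4:]


registry = InMemorySchemaRegistry()
schema = {
    "type": "record",
    "name": "User",
    "fields": [
        {"name": "name", "type": "string"},
        {"name": "age", "type": "long"},
    ],
}

# Producer side: register once, then send only the ID with each message.
schema_id = registry.register(schema)
message = frame(schema_id, b"\x06Ada\x48")  # payload: already-encoded record bytes

# Consumer side: read the ID and fetch the schema only when it is needed.
received_id, payload = unframe(message)
reader_schema = registry.lookup(received_id)

print(len(message), "bytes per message,", len(json.dumps(schema)), "bytes of schema text")
```

In a real deployment the consumer would typically cache schemas by ID, so the lookup cost is paid once per schema rather than once per message.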

When you let Avro manage your schemas, it provides you with comprehensive, flexible and automatic schema evolution. This means that you can add and delete fields. Even renaming is allowed within certain limits. At the same time, Avro schemas can be backward and forward compatible, which means that the schema versions of the reader and the writer can differ. Other serialization frameworks with schema support exist, including Google Protocol Buffers and Apache Thrift. However, its JSON-based schema definition makes Avro a very popular choice.
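How a reader can cope with data written under a different schema version can be sketched in Python. This is a simplified illustration that works on already decoded records, not the resolution logic of the Avro library. It follows the general rules described above: added fields are filled from a default, deleted fields are ignored, and renamed fields are matched via aliases. The function name `resolve` and both schemas are assumptions for this example.

```python
def resolve(record: dict, reader_schema: dict) -> dict:
    """Project a record written with one schema version onto the reader's schema."""
    result = {}
    for field in reader_schema["fields"]:
        # A reader field matches a written field by name or by one of its aliases.
        candidates = [field["name"], *field.get("aliases", [])]
        match = next((name for name in candidates if name in record), None)
        if match is not None:
            result[field["name"]] = record[match]
        elif "default" in field:
            # Field was added after the data was written: use the default.
            result[field["name"]] = field["default"]
        else:
            raise ValueError(f"no value or default for field {field['name']!r}")
    # Written fields that the reader does not know are dropped.
    return result


# Record as it was written with version 1 of a hypothetical schema.
v1_record = {"name": "Ada", "age": 36}

# Version 2 of the schema: "name" renamed, "email" added, "age" deleted.
READER_V2 = {
    "type": "record",
    "name": "User",
    "fields": [
        {"name": "full_name", "type": "string", "aliases": ["name"]},
        {"name": "email", "type": "string", "default": ""},
    ],
}

print(resolve(v1_record, READER_V2))
```

The default value is what makes adding a field safe here. Without it, the reader has no way to fill the gap for data that was written before the field existed.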