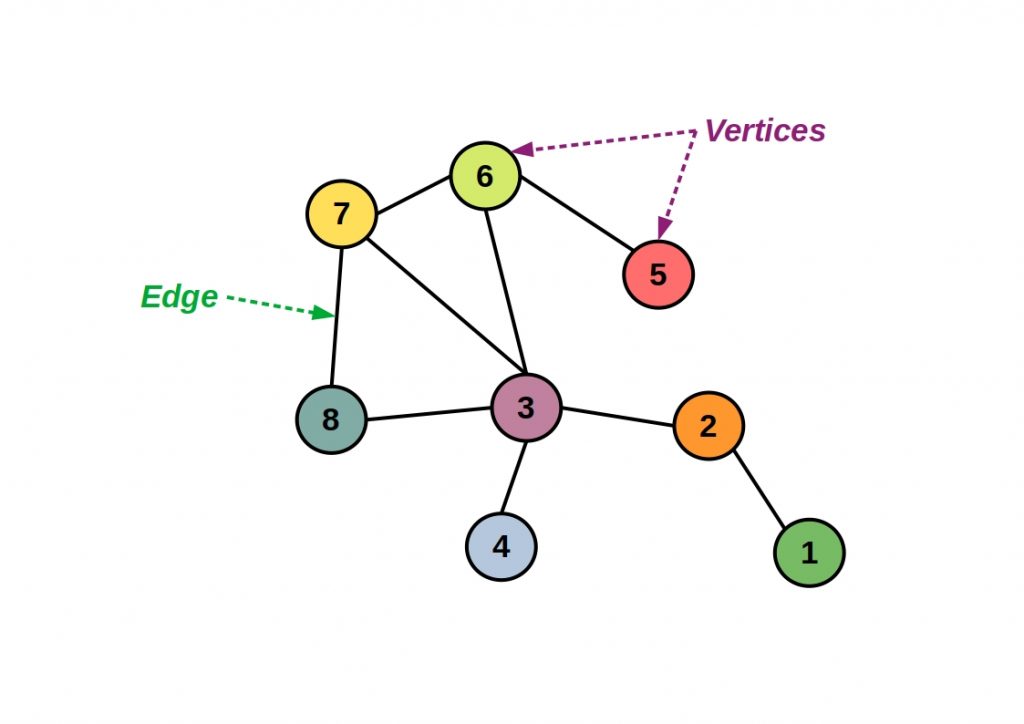

PyTorch BigGraph – The graph is a data structure that can be used to clearly represent relationships between data objects as nodes and edges.

These structures can contain billions of nodes and edges in an industrial context.

So how can the multidimensional data relationships be accessed in a meaningful way?

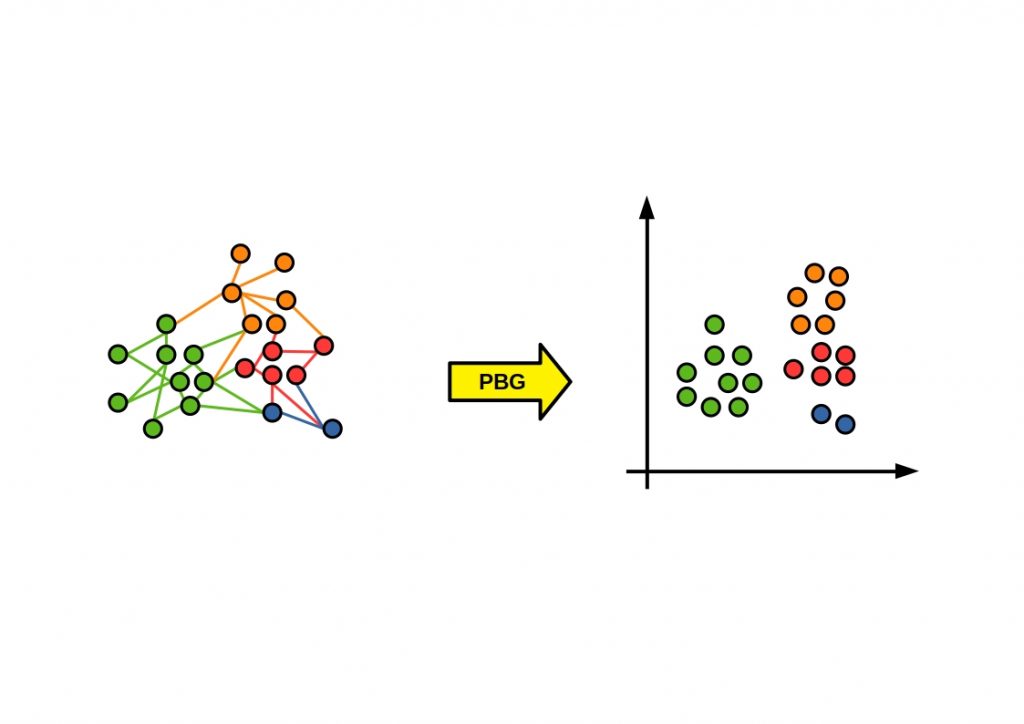

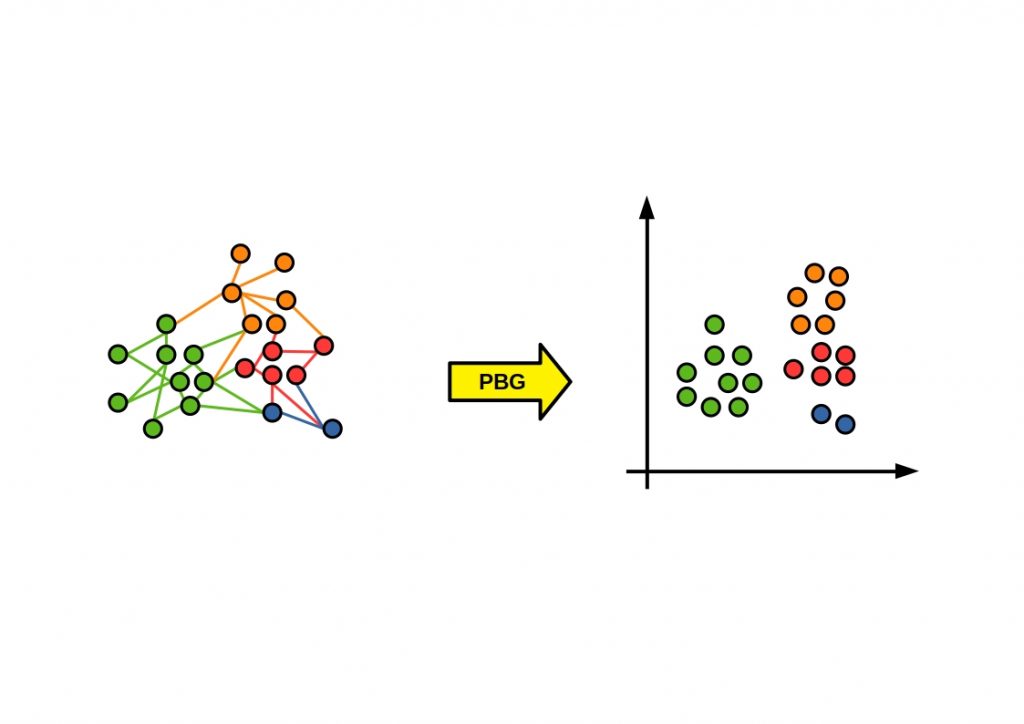

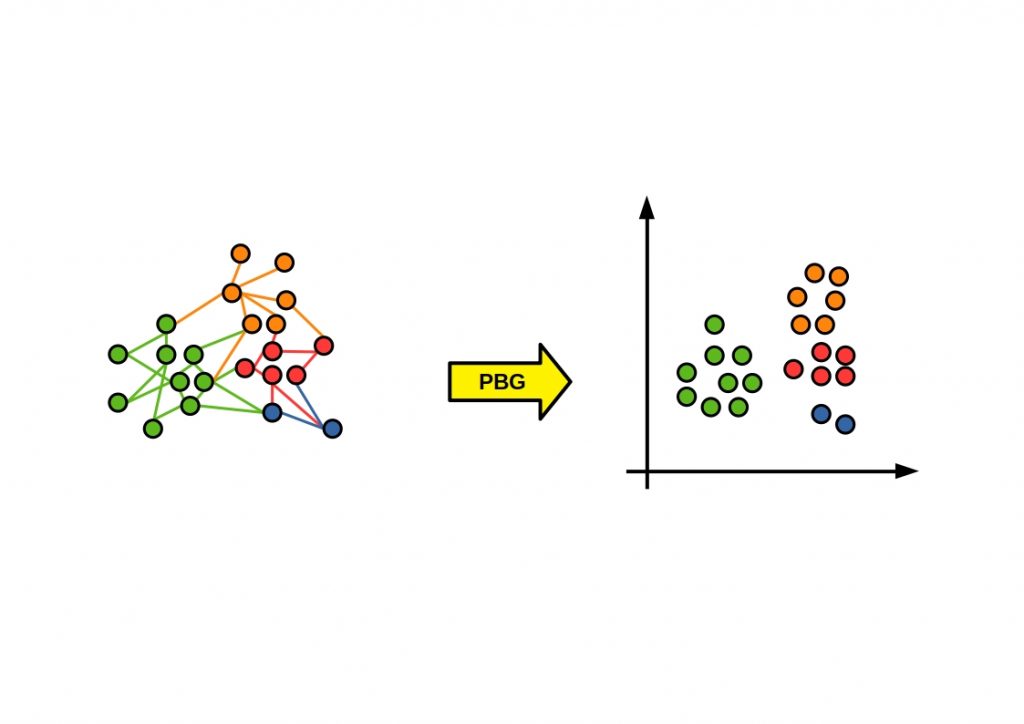

Graph embedding offers one possibility for dimension reduction.

This is a sequence of different algorithms with the goal of reducing the graph’s property relations to vector spaces. These embedding methods usually run unsupervised.

If there is a large property similarity, two points should also be close to each other in the vector space.

The reduced feature information can then be further processed with additional machine learning algorithms.

Table of Contents

What is PyTorch BigGraph?

Facebook offers PyTorch BigGraph, an open source library that can be used to create very performant graph embeddings for extremely large graphs.

It is a distributed system that can unsupervised learn graph embeddings for graphs with billions of nodes and trillions of edges. It was launched in 2019 and is written entirely in Python. This ensures absolute compatibility with common Python data processing libraries, such as NumPy, Pandas, and scikit-learn.

All calculations are performed on the CPU, which should play a decisive role in the hardware selection. A lot of memory is mandatory. It should also be noted that PBG can process very performant large graphs, but is not optimized for small graphs, i.e. structures with less than 100.000 nodes.

Facebook extends the ecosystem of its popular Python scientific computing package PyTorch with a very performant Big Graph solution. If you want to know more about PyTorch, you should read this article from us. Here we will show you the most important features and compare it with the industry’s top performer Google Tensorflow.

Fundamental building blocks

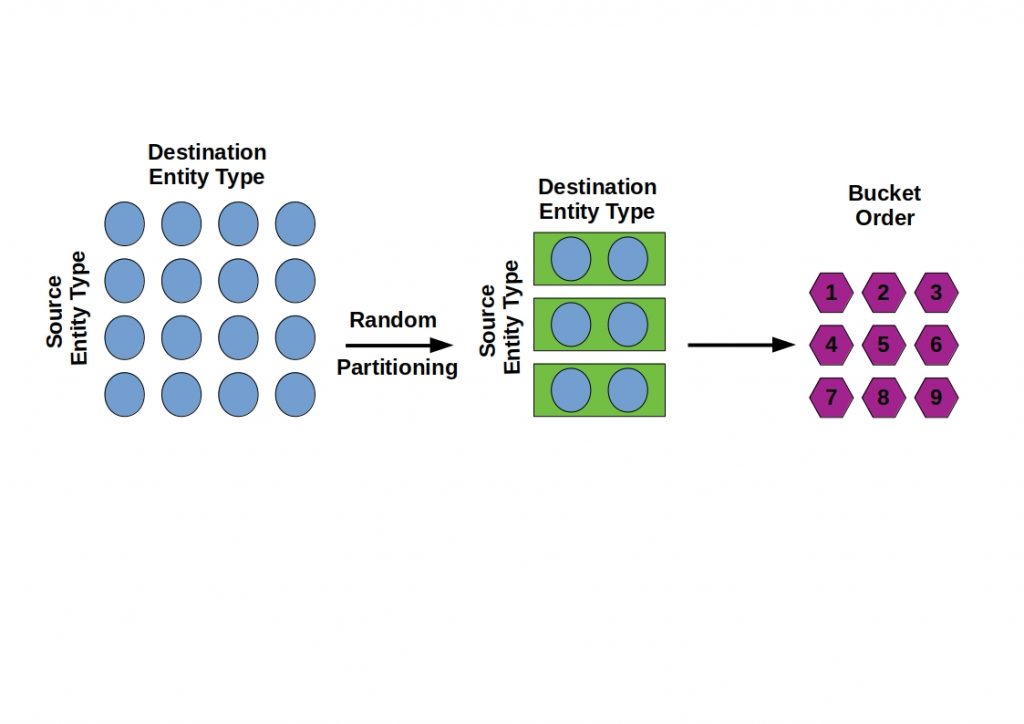

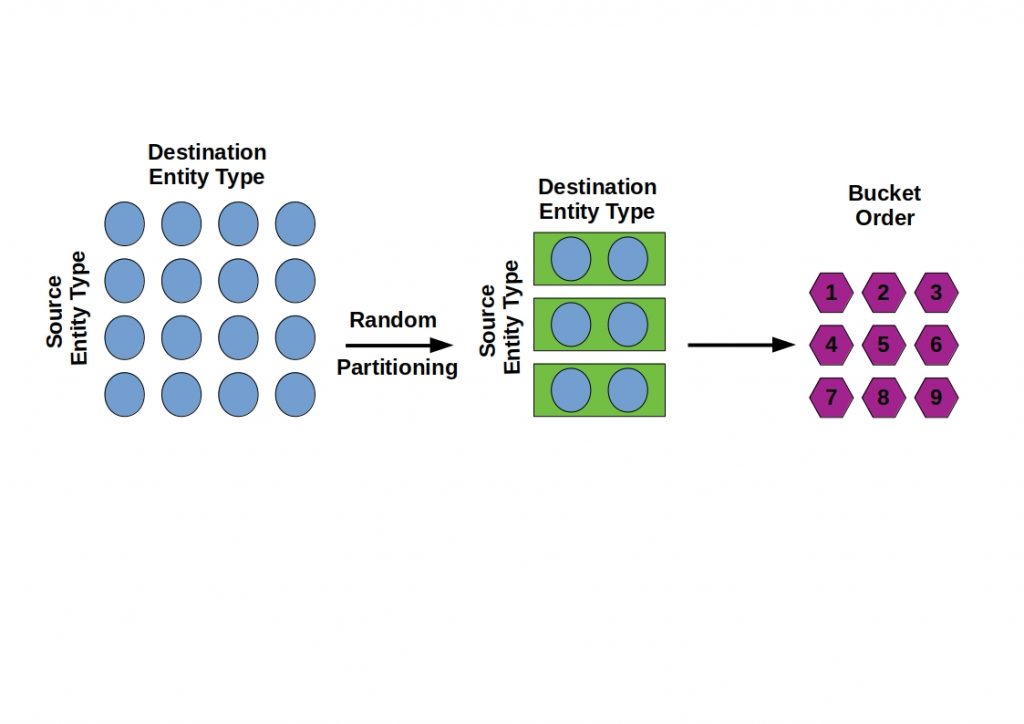

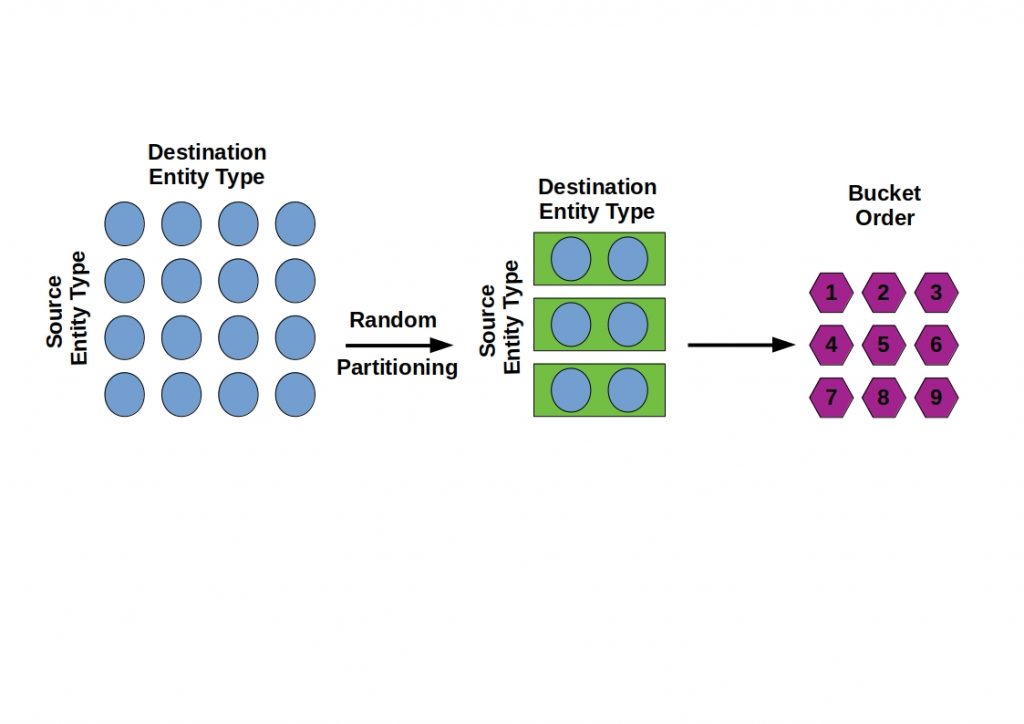

PGB provides some basic building blocks to handle the complexity of the graph. The graph partitioning splits the graph into equal parts and can be processed in parallel. PGB also supports multithreading computations. A process is divided into several threads, which run independently, but can access the same memory. In addition to the distribution of tasks, PyTorch BigGraph can also be used intelligently by distributed execution of hardware resources.

PyTorch BigGraph- How does the training work?

The PGB graph processing algorithms can process the graph in parallel using the fundamental building blocks already described. This allows the training mechanisms to run in a distributed manner and thus with high performance.

Once the nodes and edges are partitioned, the training can be performed for one bucket at a time.

The training runs unsupervised on an input graph by reading its edge list.

A feature vector is then output for each entity. Here, neighboring entities in the vector space are placed close to each other, while unconnected entities are pushed apart. Thus, the dimensions are iteratively reduced. It is also possible to configure and optimize this calculation using parameters learned during training.

PGB and Machine Learning

The graph structure is a very information-rich and so far unfortunately too much neglected data structure. With tools like PGB the large structure is more and more equalized by high parallelism.

A very interesting concept is the use of PGB in machine learning large graph structures. Here, the graph structures could be used for semantic queries with nodes, edges and properties to represent and store data and could replace a labeled data structure. Through the connections between the nodes certain relations can be derived. By PGB the graph can be processed enormously parallelized. This would allow individual machines to train a model in parallel with different buckets, using a lock server.

Leave a Reply