Apache Spark Streaming – Every company produces several million pieces of data every day. Properly analyzed, this information can be used to derive valuable business strategies and increase productivity.

Until now, this data was typically consumed and stored in a persistence layer. Even today, this is an important step for analyzing historical data at a later date. Often, however, analysis results are needed in real time, even if only to detect that reference values have been exceeded.

So-called data streams, i.e. data that is continuously generated from thousands of data sources, can be consumed before they reach persistent storage, without significantly reducing the flow rate. It is even possible to train neural networks using such a stream.

In this article, we’ll tell you why you shouldn’t miss out on Apache Spark and Apache Spark Streaming if you’re planning to integrate stream processing in your organization.

What is Apache Spark?

Apache Spark has become one of the most important and performant unified data analytics engines on the market today. The framework provides a complete solution for data processing and AI integration. This allows companies to develop performant data pipelines and train AI models on massive data streams.

Apache Spark combines several partially interdependent components, so it can be deployed in a modular fashion to a certain extent.

Spark can run in its standalone cluster mode, on EC2, on Hadoop YARN, on Mesos or on Kubernetes.

The data here can come from streaming sources, such as Kafka, as well as static data sources. So far, the programming languages Java, Scala, Python and R are supported. These are currently the most commonly used languages across all scientific disciplines for implementing data analysis methods.

What does a Spark cluster look like?

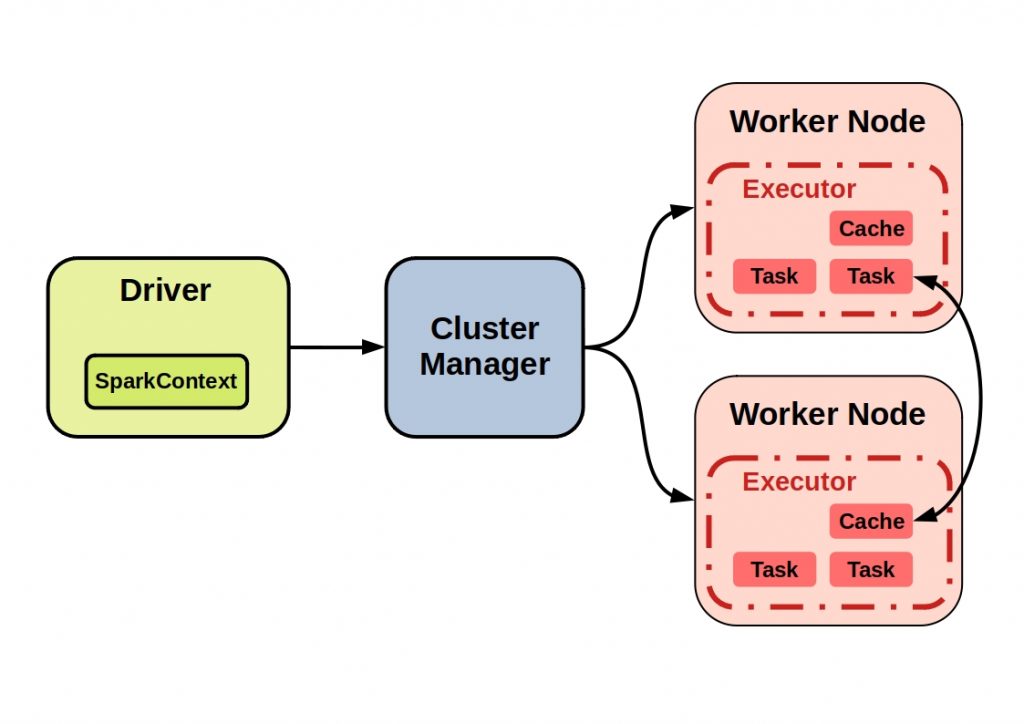

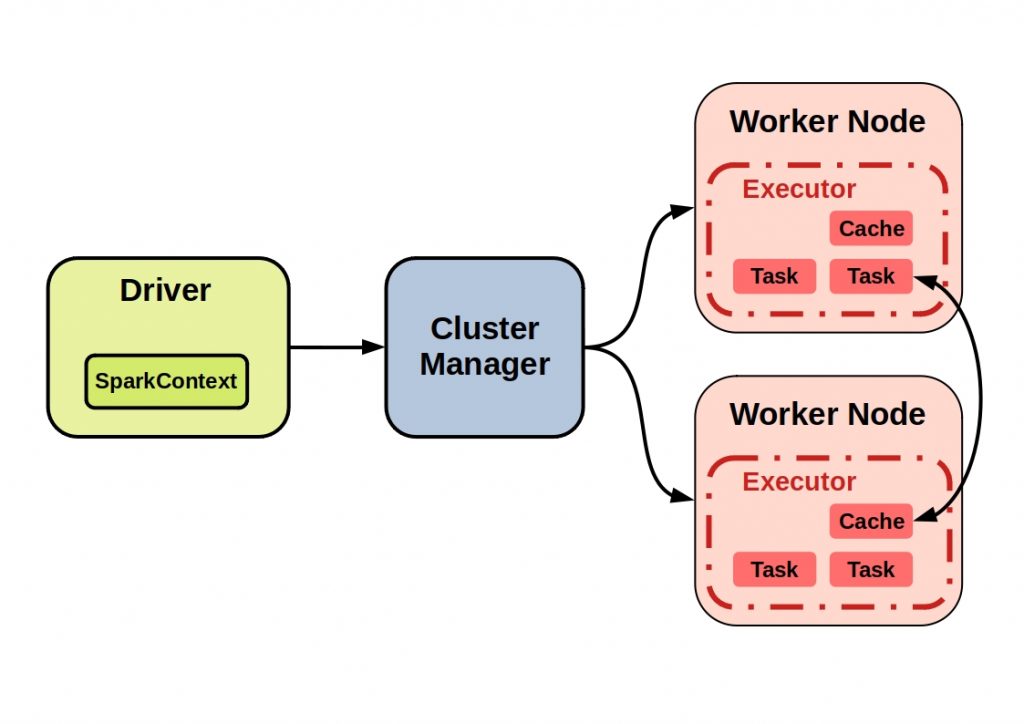

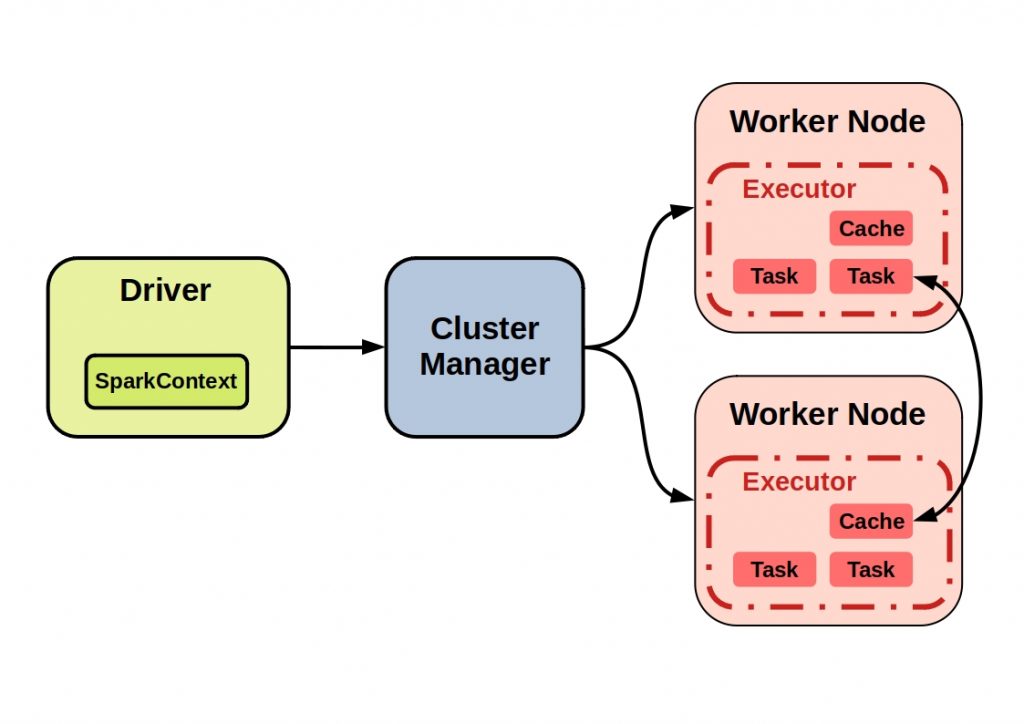

Spark applications run as independent sets of processes on a cluster. Each application is coordinated by the so-called SparkContext object in its main program, the driver program.

The SparkContext connects to the central element, a cluster manager, which allocates resources to the individual applications.

The figure below shows an example of a typical Spark cluster with all its components.

The actual calculations and data storage then take place on the worker nodes. The processes running there, called executors, execute tasks and hold the data in memory or on disk. Cached data can then be accessed from another node.

Apache Spark's underlying technology – the key to high performance

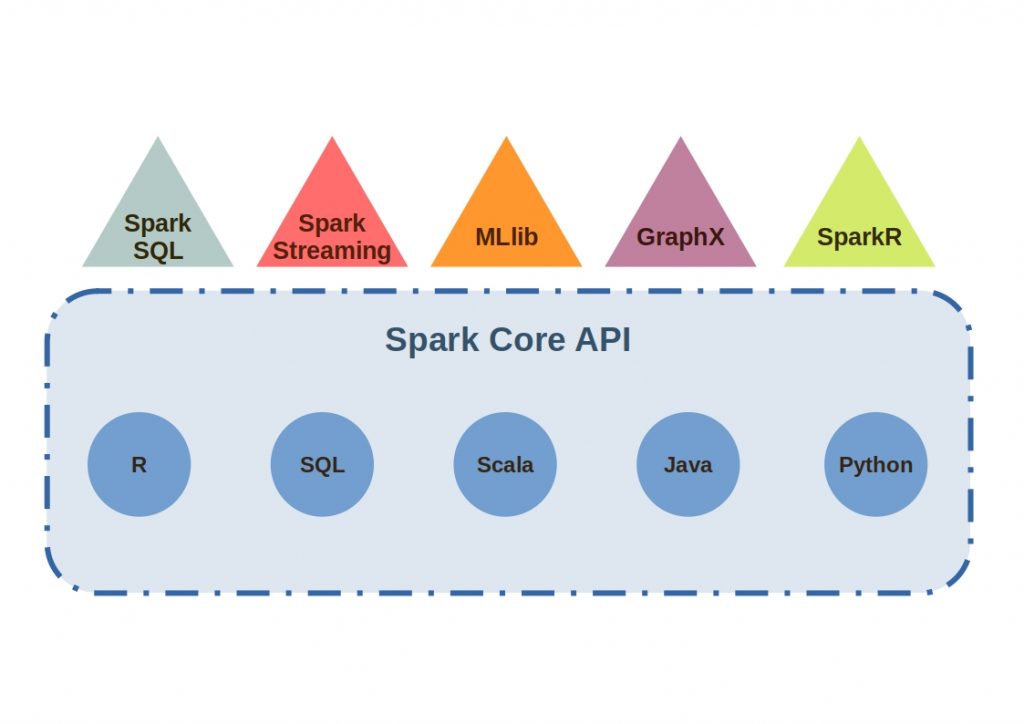

Spark Core is the underlying unified computing engine on which all Spark functions are built. It enables parallel processing even for large datasets and thus ensures very high-performance processes.

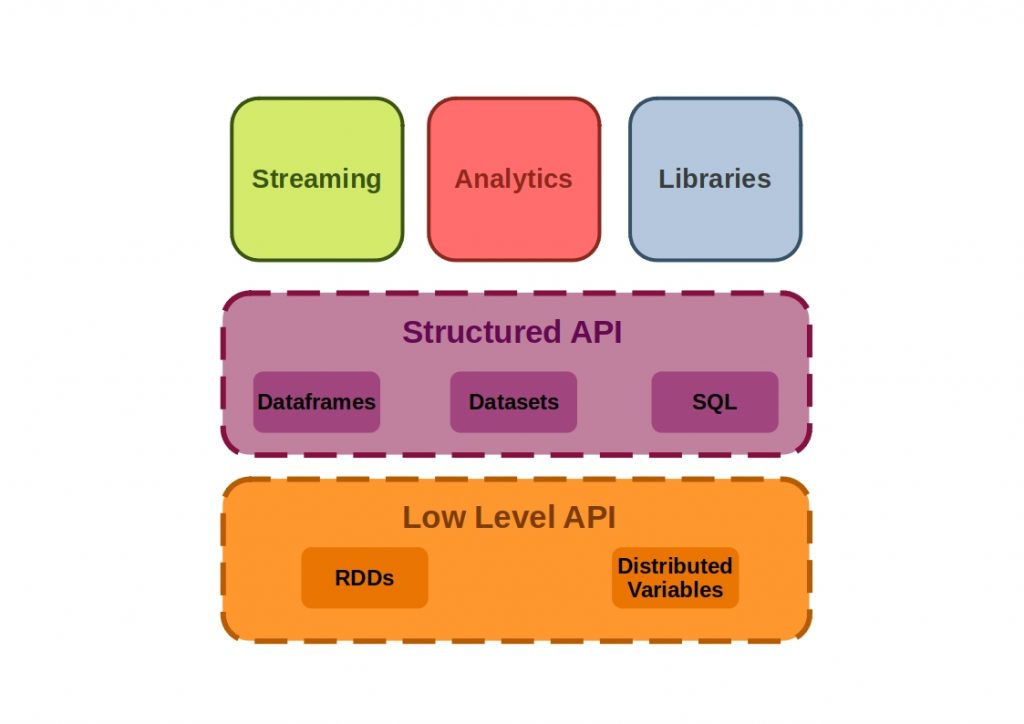

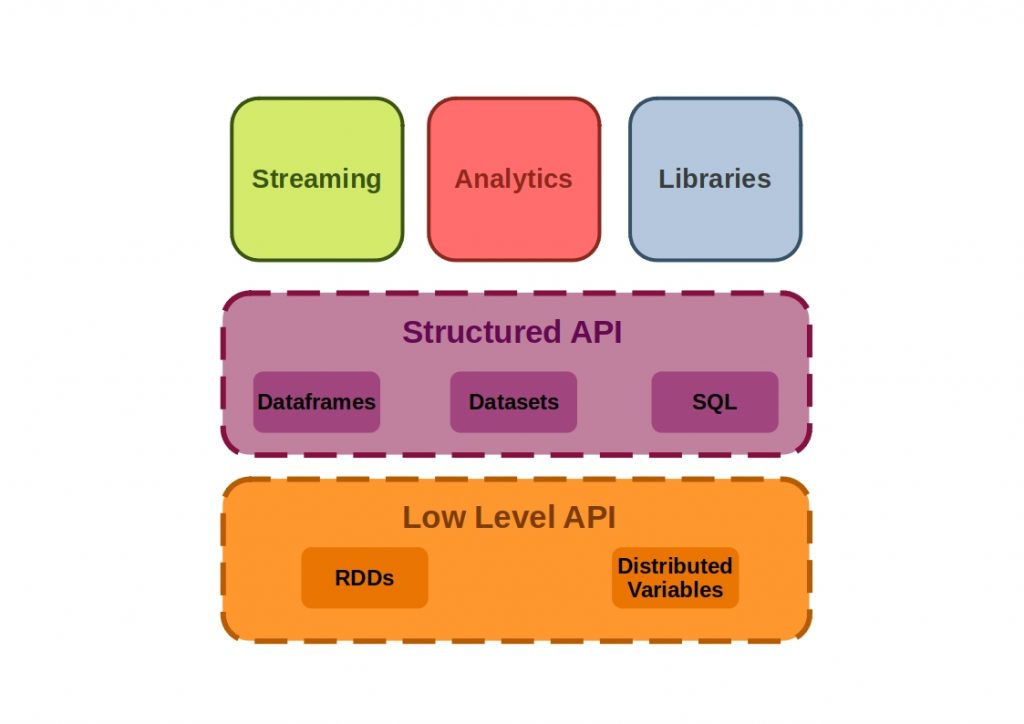

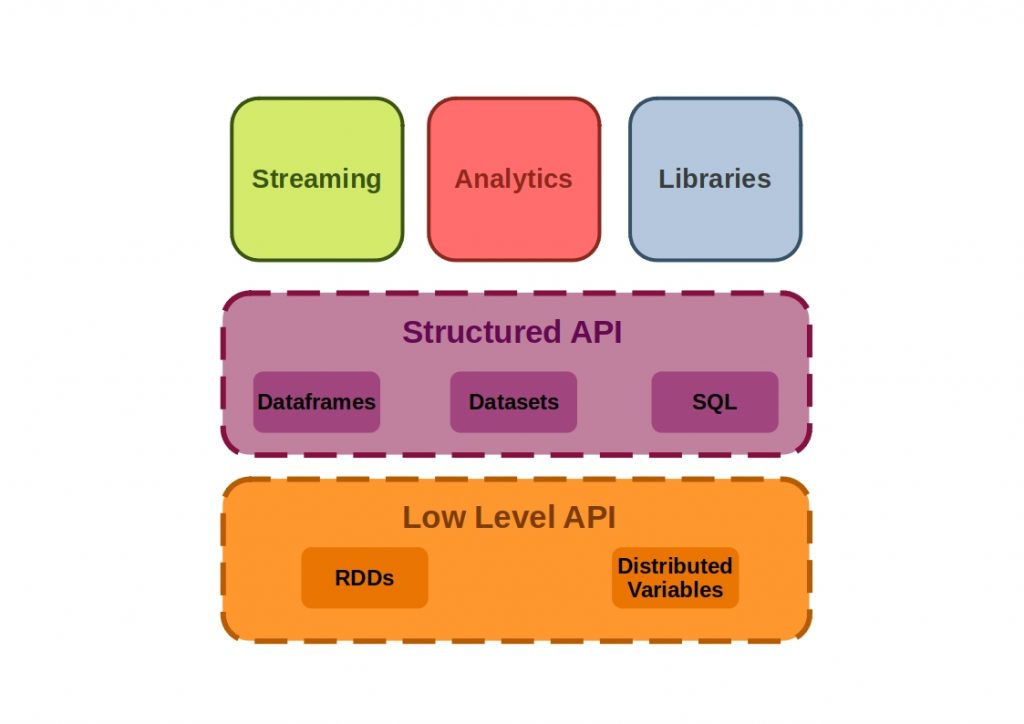

The following figure shows how the Apache Spark Core APIs are composed.

The core API consists of two layers: the low-level APIs, where objects are manipulated via Resilient Distributed Datasets (RDDs), and the structured APIs, where data of all types is manipulated and batch or streaming jobs run.

How do the individual Apache Spark APIs work?

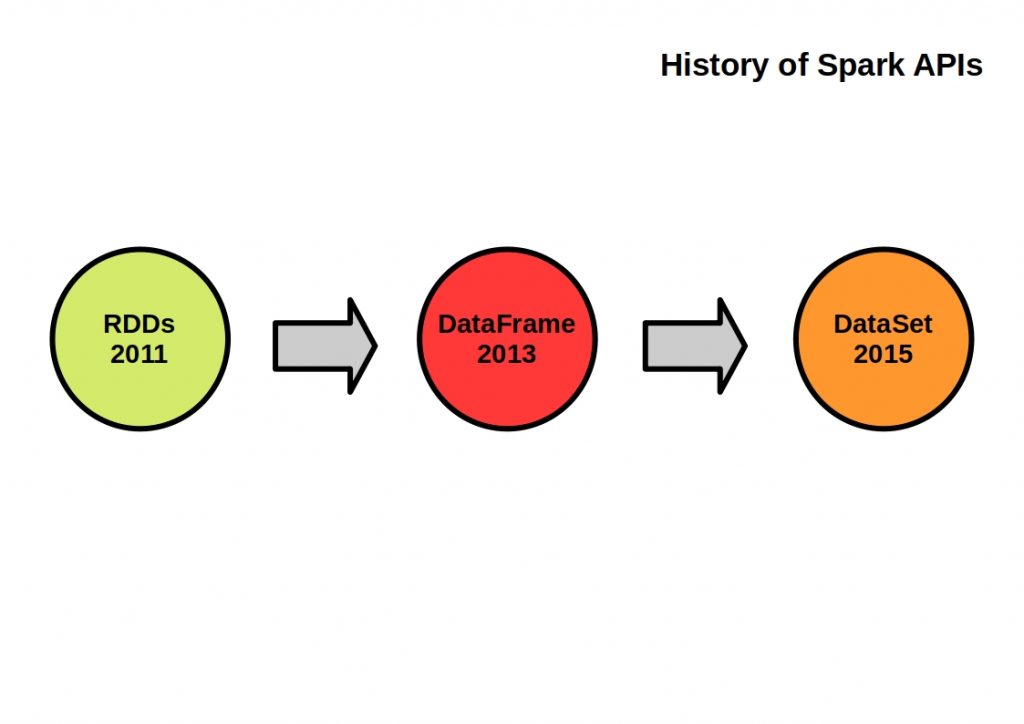

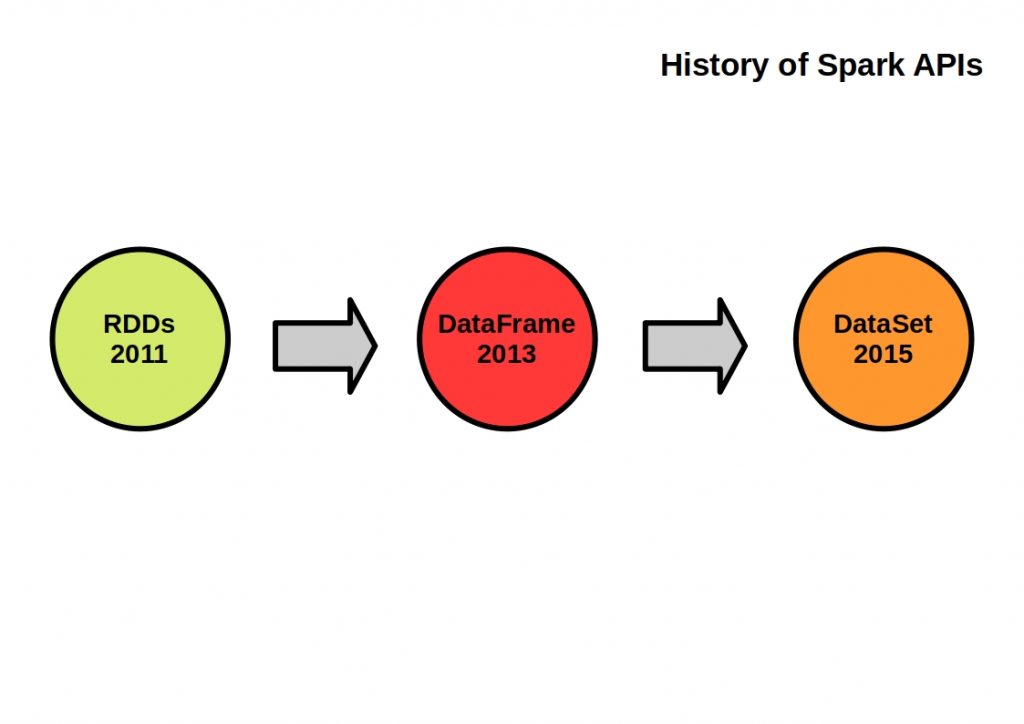

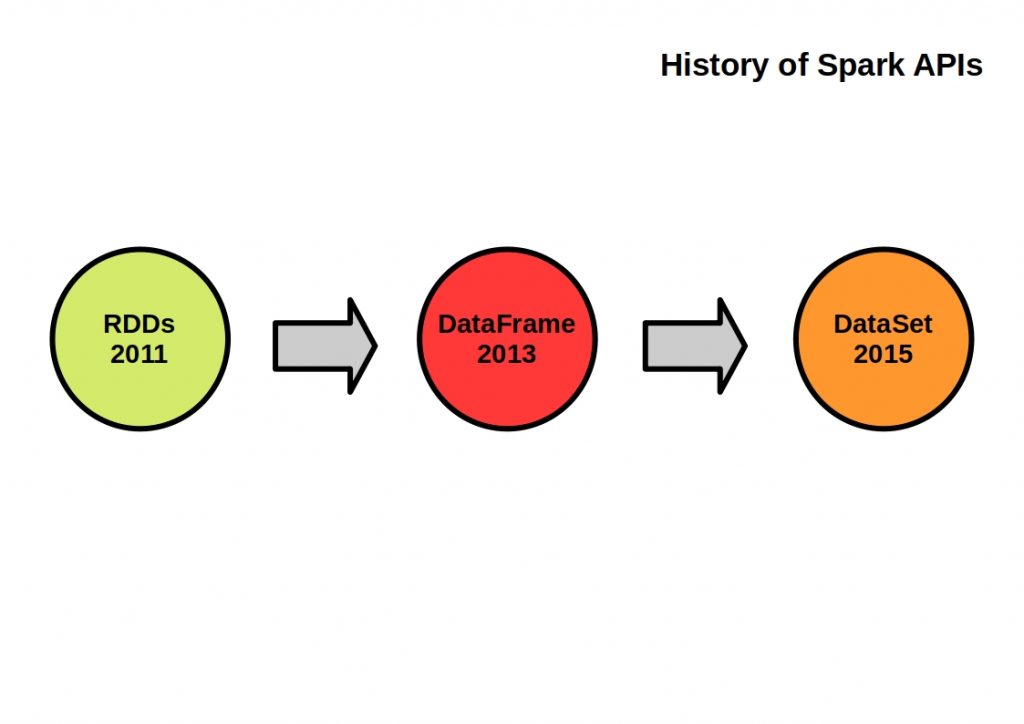

In order to properly understand the API structure, its components must be placed in a historical context.

What is the RDD API?

The RDD (Resilient Distributed Dataset) API has been part of Spark since its first release and is based on the Scala collections API.

An RDD is a collection of Java or Scala objects that represent data, which makes RDDs the building blocks of Spark. Their strengths are compile-time type safety and lazy evaluation.

All higher-level APIs can be decomposed into RDDs. Various transformations can be performed in parallel using this API. Each transformation defines an operation that produces a new RDD representing the transformed data. The operation is only executed once an action method is called.
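The interplay of transformations and actions can be illustrated with a small plain-Python toy model. This is only a sketch and not Spark's RDD API: the class `LazyCollection` and the helper `square` are hypothetical names invented for this example, and nothing here runs in parallel or on a cluster. It only shows that transformations return a new object and that work happens when an action is called.

```python
class LazyCollection:
    """Toy stand-in for an RDD: records transformations, runs them only on an action."""

    def __init__(self, source, steps=()):
        self._source = source        # underlying data (must be re-iterable)
        self._steps = tuple(steps)   # recorded transformations, not yet executed

    # Transformations: return a NEW LazyCollection and compute nothing.
    def map(self, fn):
        return LazyCollection(self._source, self._steps + (("map", fn),))

    def filter(self, predicate):
        return LazyCollection(self._source, self._steps + (("filter", predicate),))

    def _run(self):
        # Chain the recorded steps into one iterator pipeline.
        data = iter(self._source)
        for kind, fn in self._steps:
            data = map(fn, data) if kind == "map" else filter(fn, data)
        return data

    # Actions: trigger execution and return a concrete result.
    def collect(self):
        return list(self._run())

    def count(self):
        return sum(1 for _ in self._run())


calls = []  # records every element that was actually processed


def square(x):
    calls.append(x)
    return x * x


numbers = LazyCollection(range(1, 6))
squares = numbers.map(square)                          # transformation only
even_squares = squares.filter(lambda x: x % 2 == 0)    # transformation only

executed_before_action = len(calls)   # still 0: nothing has run yet
result = even_squares.collect()       # the action triggers the computation
```

In this toy model, `numbers` is never modified: each transformation yields a new object that describes the transformed data, which mirrors the behavior described above.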

What is the DataFrame API?

The DataFrame API introduces a higher-level abstraction. Spark DataFrames are conceptually similar to pandas DataFrames. They are built on top of RDDs and represent two-dimensional data together with a schema. A DataFrame contains an ordered collection of columns, and each column can have a different data type. Each value is identified by its row and its column.

When data is transferred between nodes, only the data itself is transferred. The metadata that describes its structure is managed separately in the schema. This has significantly improved the performance and scalability of Spark.
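Why it helps to keep the structure apart from the data can be shown with a rough plain-Python sketch. It does not reproduce Spark's internal serialization format. The column names, the JSON encoding and the helper functions are assumptions made purely for illustration. The sketch compares a payload in which every record repeats its column names with one that carries only the values.

```python
import json

# Schema: column names and types, stored once (hypothetical example columns).
schema = [("sensor_id", "string"), ("temperature", "double"), ("ok", "boolean")]

# Data: plain rows without any column names attached.
rows = [
    ["s-1", 21.5, True],
    ["s-2", 19.0, True],
    ["s-3", 48.2, False],
]


def encode_with_structure(schema, rows):
    """Every record repeats its column names, so structure travels with the data."""
    names = [name for name, _ in schema]
    return json.dumps([dict(zip(names, row)) for row in rows])


def encode_data_only(rows):
    """Only the values travel; the receiver is assumed to know the schema."""
    return json.dumps(rows)


def decode_data_only(payload, schema):
    """Reattach the column names on the receiving side using the shared schema."""
    names = [name for name, _ in schema]
    return [dict(zip(names, row)) for row in json.loads(payload)]


verbose = encode_with_structure(schema, rows)
compact = encode_data_only(rows)
restored = decode_data_only(compact, schema)

print(len(verbose), len(compact))  # the data-only payload is the shorter one
```

Both payloads describe the same records, but the second one avoids sending the column names with every row.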

The API is suitable for creating a relational query plan. Thus, manipulation of data can now be done using a query language.

What is the Dataset API?

When working with DataFrames, compile-time type safety is lost, although it is one of the strengths of the RDD API. The Dataset API was created to combine the advantages of both APIs. It is thus the second most important Spark API next to the RDD API.

This API is based on built-in encoders, which convert between JVM objects and the internal Spark SQL representation.

What components does Apache Spark consist of?

Spark is modularly extensible through the use of components. Spark includes libraries for various tasks ranging from SQL to streaming and machine learning. All components are based on Spark Core, the foundation for parallel and distributed processing of large data sets. The section on Spark's underlying technology explains what this API looks like in detail and what makes it so performant.

The following figure lists the individual Apache Spark components.

Apache Spark SQL

With this component, RDDs are converted into so-called DataFrames, i.e. they are enriched with metadata.

Queries are optimized by the Catalyst optimizer, which represents the execution plan as a tree.
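The idea of a plan held as a tree that an optimizer rewrites can be sketched in a few lines of plain Python. This is a toy model and not Catalyst itself: the classes `Literal`, `Column` and `Add` and the functions `fold_constants` and `evaluate` are illustrative names defined here, and the only rewrite rule shown is constant folding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Literal:
    value: int


@dataclass(frozen=True)
class Column:
    name: str


@dataclass(frozen=True)
class Add:
    left: object
    right: object


def fold_constants(node):
    """Rewrite rule: replace Add(Literal, Literal) with one Literal, bottom-up."""
    if isinstance(node, Add):
        left = fold_constants(node.left)
        right = fold_constants(node.right)
        if isinstance(left, Literal) and isinstance(right, Literal):
            return Literal(left.value + right.value)
        return Add(left, right)
    return node


def evaluate(node, row):
    """Evaluate an expression tree against one row given as a dict."""
    if isinstance(node, Literal):
        return node.value
    if isinstance(node, Column):
        return row[node.name]
    return evaluate(node.left, row) + evaluate(node.right, row)


# Expression tree for: x + (1 + 2)
plan = Add(Column("x"), Add(Literal(1), Literal(2)))
optimized = fold_constants(plan)

print(optimized)  # the constant subtree has been folded into a single literal
```

The optimized tree gives the same result for every row but needs one addition fewer per row, which is the kind of gain a rule-based tree rewrite aims for.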

Apache Spark GraphX

This framework can be used to perform high-performance calculations on graphs. These operations can run in parallel.

Apache Spark MLlib/SparkML

With the MLlib component, machine learning pipelines can be constructed very easily. For this purpose, ready-made models and common machine learning algorithms (classification, regression, clustering …) can be used. Thus, data identification, feature extraction and transformation are combined in a unified framework.

Apache Spark Streaming

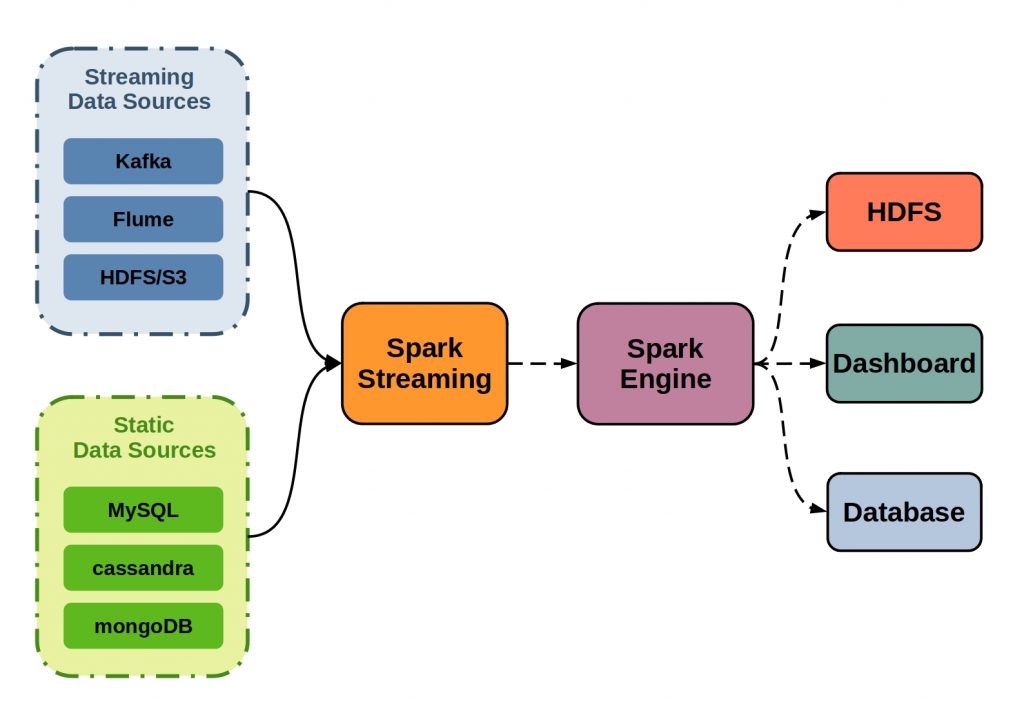

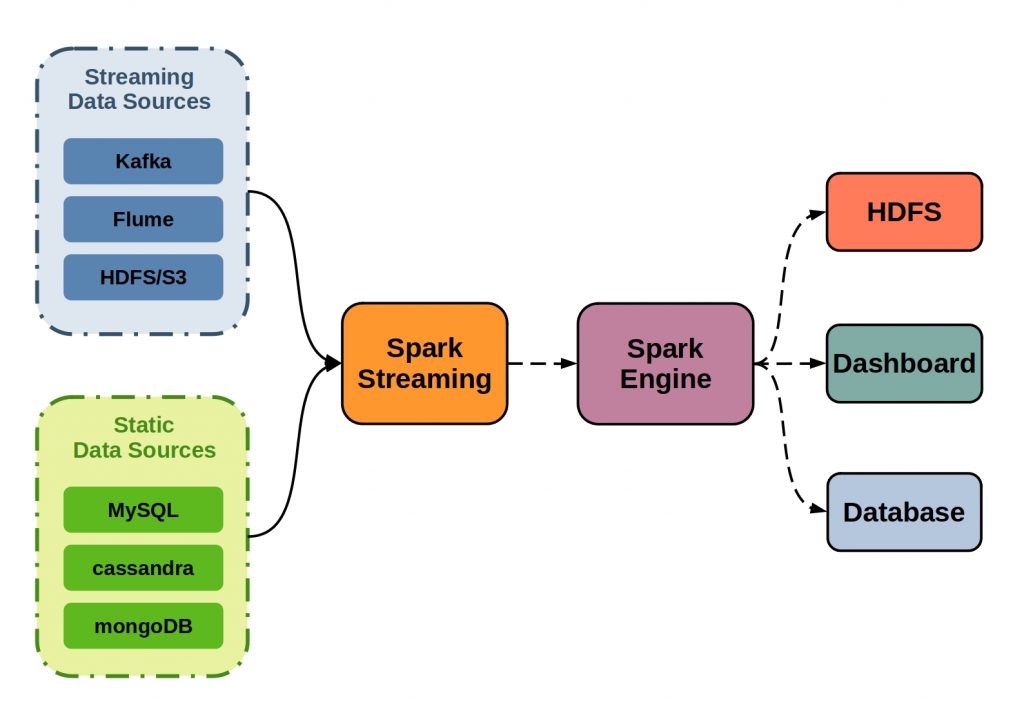

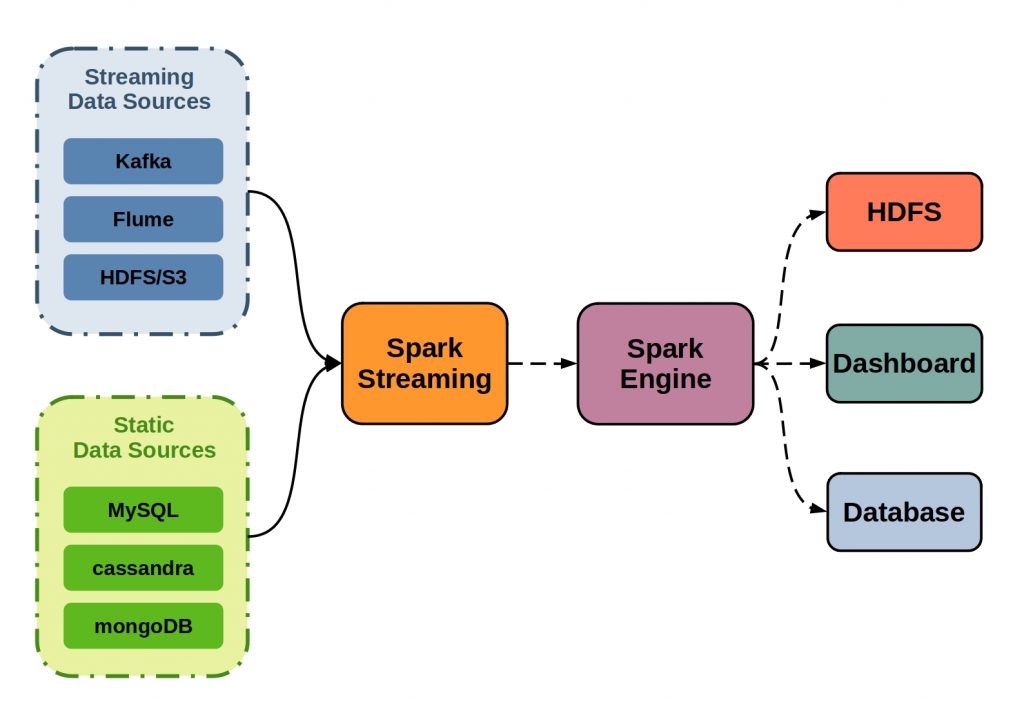

Apache Spark Streaming enables and controls the processing of data streams. However, Apache Spark Streaming can also process data from static data sources.

In the case of data streaming, the input stream flows from a streaming data source, such as Kafka, Flume or HDFS, into Apache Spark Streaming.

There, it is broken into batches and fed into the Spark engine for parallel processing. The final results can then be output to HDFS, databases and dashboards.
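The batching step can be approximated with a short plain-Python sketch. It is a simplification under stated assumptions: the batches here are cut by element count rather than by a time interval, the processing is sequential instead of parallel, and `micro_batches` and `process_batch` are hypothetical helper names, not part of any Spark API.

```python
from itertools import islice


def micro_batches(stream, batch_size):
    """Cut a (potentially endless) iterator into consecutive lists of batch_size items."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def process_batch(batch):
    """Stand-in for a job on the processing engine: count the words in one batch."""
    counts = {}
    for line in batch:
        for word in line.split():
            counts[word] = counts.get(word, 0) + 1
    return counts


# Made-up input lines standing in for messages from a streaming source.
incoming = iter(["spark streaming", "spark core", "kafka spark", "hdfs", "kafka"])

results = [process_batch(batch) for batch in micro_batches(incoming, batch_size=2)]

for batch_result in results:
    print(batch_result)
```

Each batch is processed as a small, finite data set, so the same logic that works on static data can be reused on the stream.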

The following figure illustrates the principle of Apache Spark Streaming.

All components can consume directly from the stream via Apache Spark Streaming, which plays a crucial role here. It coordinates the requests via sliding window operations and regulates the data flow. Since all components are based on the Spark Core API, they are compatible with one another. Especially in the Big Data area, this can deliver a decisive performance advantage.
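A sliding window operation can be sketched in plain Python as follows. This is an illustrative toy and not the Spark Streaming API: window length and slide interval are counted in batches here, the input numbers are made up, and `sliding_windows` and the threshold value are hypothetical.

```python
def sliding_windows(batches, window_length, slide_interval):
    """Yield the batches covered by each window; both parameters count batches."""
    for end in range(window_length, len(batches) + 1, slide_interval):
        yield batches[end - window_length:end]


# Made-up example: number of events observed in each batch interval.
batch_counts = [3, 1, 4, 1, 5, 9, 2]

# A window spans 3 batches and moves forward by 2 batches each time.
window_sums = [
    sum(window)
    for window in sliding_windows(batch_counts, window_length=3, slide_interval=2)
]

# Flag windows whose total exceeds a (hypothetical) reference value.
threshold = 12
alerts = [total > threshold for total in window_sums]

print(window_sums, alerts)
```

Because consecutive windows overlap, a batch can contribute to more than one window, which is what distinguishes a sliding window from simple per-batch processing.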
